The Rationalist's Guide to the Galaxy: Superintelligent AI and the Geeks Who Are Trying to Save Humanity's Future

by Tom Chivers · 12 Jun 2019 · 289pp · 92,714 words

Effective Altruists, and 22 per cent had made ‘donations they otherwise wouldn’t’ because of Effective Altruism.7 Two other OpenPhil employees, Helen Toner and Ajeya Cotra – whom you’ll meet shortly – told me that they’d either come to Effective Altruism through LessWrong or found the two at the same time

…

the OpenPhil work that is most relevant to this book is its focus on global catastrophic risks, and especially AI.

Chapter 39: EA and AI

Ajeya Cotra is a research analyst for OpenPhil. She and her colleague Helen Toner, an Australian, both work on AI risk specifically, and both say that it

…

’s DeepMind is explicitly worried about it; co-founders Shane Legg and Demis Hassabis both take it seriously. ‘It’s been amazingly quick progress,’ commented Ajeya Cotra, of OpenPhil. ‘I think the discourse around technical safety of these in 2014, compared to now, feels like different worlds.’ Bostrom’s book Superintelligence was

…

-building point of view, Yudkowsky et al. have been extraordinarily successful. ‘There are well-known, well-respected machine-learning researchers involved now,’ said Helen Toner, Ajeya Cotra’s OpenPhil colleague. ‘How to build this field is to make it something young people feel comfortable going into – not just starry-eyed Rationalists who

…

take out some of the sillier jokes. Linden Lawson did a sterling job clearing up my waffly and repetitious prose. I owe great thanks to Ajeya Cotra, Andrew Sabisky, Anna Salamon, Buck Shlegeris, Catherine Hollander, David Gerard, Diana Fleischman, Helen Toner, Holden Karnofsky, Katja Grace, Michael Story, Mike Levine, Murray Shanahan, Nick

What We Owe the Future: A Million-Year View

by William MacAskill · 31 Aug 2022 · 451pp · 125,201 words

all domains, just as current AI systems far outperform humans at chess and Go. And this poses a major challenge. To borrow an analogy from Ajeya Cotra, a researcher at Open Philanthropy, think of a child who has just become the ruler of a country.78 The child can’t run the

…

the International Energy Agency since 2006. Graph shows capacity growth per year (rather than cumulative total). The most weighty evidence for this is marshalled by Ajeya Cotra. Her report forecasts trends in computing power over time and compares those trends to the computing power of the brains of biological creatures and the

On the Edge: The Art of Risking Everything

by Nate Silver · 12 Aug 2024 · 848pp · 227,015 words

are kept in check. And the people making the big calls about what happens are a coalition of AI systems.” —As told to me by Ajeya Cotra, an AI researcher at Open Philanthropy These definitions make a big difference. Cotra, for instance, has a p(doom) of 20 to 30 percent. “You

…

propose some principles to guide us through the next decades and hopefully far beyond.

The Steelman Case for a High p(doom)

When I asked Ajeya Cotra for her capsule summary for why we should be concerned about AI risk, she gave me a pithy answer. “If you were to tell a

The Optimist: Sam Altman, OpenAI, and the Race to Invent the Future

by Keach Hagey · 19 May 2025 · 439pp · 125,379 words

soon join the Biden administration’s Commerce Department as part of a new division overseeing AI policy. The OpenAI board did interview Christiano’s wife, Ajeya Cotra, an AI safety expert at Open Philanthropy and the founder of an EA student group at Berkeley, for the post, but the process stalled out

Supremacy: AI, ChatGPT, and the Race That Will Change the World

by Parmy Olson · 284pp · 96,087 words

referred to how people quantified the risk of an AI doomsday. Someone with a more optimistic outlook might put their p(doom) at 5 percent. Ajeya Cotra, a research analyst at Open Philanthropy who helped decide grant-making, told one podcast that hers was between 20 and 30 percent. Nobody knew Bankman

The Alignment Problem: Machine Learning and Human Values

by Brian Christian · 5 Oct 2020 · 625pp · 167,349 words

. See Paul Christiano, “A Formalization of Indirect Normativity,” AI Alignment (blog), April 20, 2012, https://ai-alignment.com/a-formalization-of-indirect-normativity-7e44db640160, and Ajeya Cotra, “Iterated Distillation and Amplification,” AI Alignment (blog), March 4, 2018, https://ai-alignment.com/iterated-distillation-and-amplification-157debfd1616. 99. For an explicit discussion of

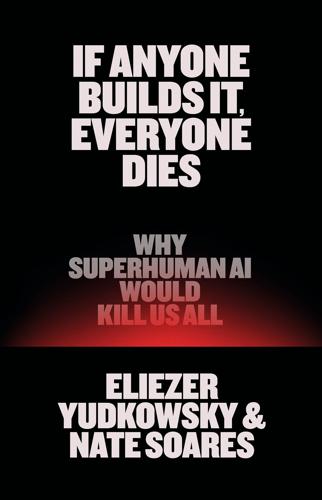

If Anyone Builds It, Everyone Dies: Why Superhuman AI Would Kill Us All

by Eliezer Yudkowsky and Nate Soares · 15 Sep 2025 · 215pp · 64,699 words

‘Deep Learning’ Is a Mandate for Humans, Not Just Machines,” Wired, May 5, 2015, accessed March 15, 2025, via web.archive.org. 21. analysts said: Ajeya Cotra, “Draft Report on AI Timelines,” Alignment Forum, September 18, 2020, alignmentforum.org. 22. one to nine years: Sam Altman, “The Intelligence Age,” September 23, 2024