Code Dependent: Living in the Shadow of AI

by Madhumita Murgia · 20 Mar 2024 · 336pp · 91,806 words

-profit enterprise that sold AI technologies to large corporations and governments around the world.4 OpenAI’s crown jewel was an algorithm called GPT – the Generative Pre-trained Transformer – software that could produce text-based answers in response to human queries. One of the authors of the ‘Attention Is All You Need’ paper, Lukasz

…

, ref7, ref8, ref9, ref10 AI alignment and ref1, ref2, ref3 ChatGPT see ChatGPT creativity and ref1, ref2, ref3, ref4 deepfakes and ref1, ref2, ref3 GPT (Generative Pre-trained Transformer) ref1, ref2, ref3, ref4 job losses and ref1 ‘The Machine Stops’ and ref1 Georgetown University ref1 gig work ref1, ref2, ref3, ref4, ref5 Amsterdam court

…

ref1 Sama ref1 Search ref1, ref2, ref3, ref4, ref5 Transformer model and ref1 Translate ref1, ref2, ref3, ref4 Gordon’s Wine Bar London ref1 GPT (Generative Pre-trained Transformer) ref1, ref2, ref3, ref4 GPT-4 ref1 Graeber, David ref1 Granary Square, London ref1, ref2 ‘graveyard of pilots’ ref1 Greater Manchester Coalition of Disabled People

The Age of AI: And Our Human Future

by Henry A Kissinger, Eric Schmidt and Daniel Huttenlocher · 2 Nov 2021 · 194pp · 57,434 words

also detected aspects of reality humans have not detected, or perhaps cannot detect. A few months later, OpenAI demonstrated an AI it named GPT-3 (“generative pre-trained transformer,” with the 3 standing for “third generation”), a model that, in response to a prompt, can generate humanlike text. Given a partial phrase, it can

Empire of AI: Dreams and Nightmares in Sam Altman's OpenAI

by Karen Hao · 19 May 2025 · 660pp · 179,531 words

summarizing or answering questions about a document, than anything else he had tried before. In 2018, OpenAI released the first version of that model, called Generative Pre-Trained Transformer, later nicknamed GPT-1. The second word in the name—pre-trained—is a technical term within AI research that refers to training a model

…

, 71–72, 132–33, 246 Gawker Media, 38 GDPR (General Data Protection Regulation), 136 Gebru, Timnit, 24, 52–53, 108, 160–70, 171–73, 414 Generative Pre-Trained Transformers. See GPT Genius Makers (Metz), 80 Geometric Intelligence, 110 Ghost Work (Gray and Suri), 193–94 Gibstine, Connie, 29–31, 44, 327–28, 331–32

Literary Theory for Robots: How Computers Learned to Write

by Dennis Yi Tenen · 6 Feb 2024 · 169pp · 41,887 words

, 70 freedom, concept of, 116 Garcia-Molina, Hector, 113 gears, 14, 49, 52–53 general intelligence, 37–38 generative grammars, 87, 88, 91, 94, 97 generative pre-trained transformers (GPT), 139 German language, 121 Germany, 26, 34 Gibson, Kevin, 127 global positioning system (GPS), 15 Godard, Jean-Luc, 93 Goethe, Johann Wolfgang von, 65

…

Gore, Al, 17 GPS (global positioning system), 15 GPT (generative pre-trained transformers), 139 GPT-4 algorithm, 129, 130 grammar(s), 21, 38, 40–42, 85–88, 90–95, 97, 102, 108, 113–14 Green, Bert, 92 Grimm

Supremacy: AI, ChatGPT, and the Race That Will Change the World

by Parmy Olson · 284pp · 96,087 words

model experiments in two weeks than over the previous two years. He and his colleagues started working on a new language model they called a “generatively pre-trained transformer” or GPT for short. They trained it on an online corpus of about seven thousand mostly self-published books found on the internet, many of

Why Machines Learn: The Elegant Math Behind Modern AI

by Anil Ananthaswamy · 15 Jul 2024 · 416pp · 118,522 words

was called a transformer, a type of architecture that’s especially suited to processing sequential data. LLMs such as ChatGPT are transformers; GPT stands for “generative pre-trained transformer.” Given a sequence of, say, ten words and asked to predict the next most plausible word, a transformer has the ability to “pay attention” to

AI 2041: Ten Visions for Our Future

by Kai-Fu Lee and Qiufan Chen · 13 Sep 2021

arrival and departure times, and a great deal more. After Google’s transformer work, a more well-known extension called GPT-3 (GPT stands for “generative pre-trained transformers”) was released in 2020 by OpenAI, a research laboratory founded by Elon Musk and others. GPT-3 is a gigantic sequence transduction engine that learned

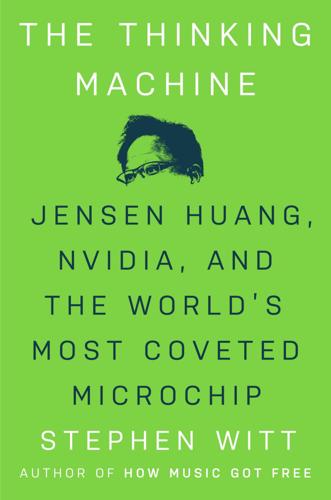

The Thinking Machine: Jensen Huang, Nvidia, and the World's Most Coveted Microchip

by Stephen Witt · 8 Apr 2025 · 260pp · 82,629 words

a large collection of text. Then it would generate text of its own. Combining the purpose, the method, and the architecture, you arrived at the “Generative Pre-Trained Transformer,” or GPT. GPT-1 launched in June 2018. It learned to read using BookCorpus, a collection of around seven thousand free, self-published ebooks. (Sci

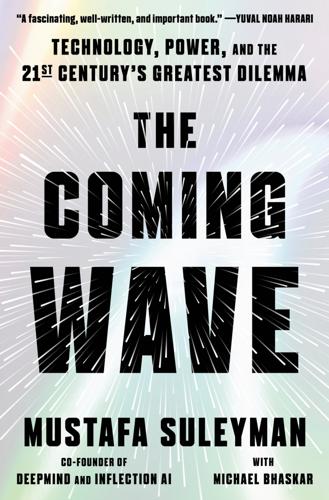

The Coming Wave: Technology, Power, and the Twenty-First Century's Greatest Dilemma

by Mustafa Suleyman · 4 Sep 2023 · 444pp · 117,770 words

researchers published the first paper on them in 2017, the pace of progress has been staggering. Soon after, OpenAI released GPT-2. (GPT stands for generative pre-trained transformer.) It was, at the time, an enormous model. With 1.5 billion parameters (the number of parameters is a core measure of an AI system

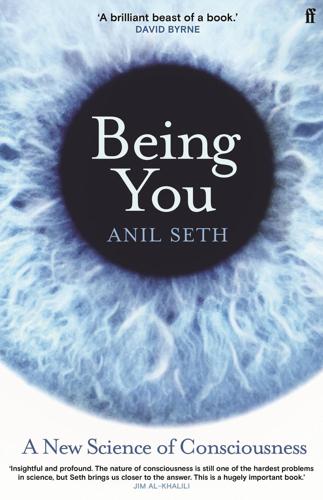

Being You: A New Science of Consciousness

by Anil Seth · 29 Aug 2021 · 418pp · 102,597 words

.com/2015/09/21/technology/personaltech/software-is-smart-enough-for-sat-but-still-far-from-intelligent.html. vast artificial neural network: GPT stands for ‘Generative Pre-trained Transformer’ – a type of neural network specialised for language prediction and generation. These networks are trained using an unsupervised deep learning approach essentially to ‘predict the

The Optimist: Sam Altman, OpenAI, and the Race to Invent the Future

by Keach Hagey · 19 May 2025 · 439pp · 125,379 words

Searches: Selfhood in the Digital Age

by Vauhini Vara · 8 Apr 2025 · 301pp · 105,209 words

This Is for Everyone: The Captivating Memoir From the Inventor of the World Wide Web

by Tim Berners-Lee · 8 Sep 2025 · 347pp · 100,038 words

System Error: Where Big Tech Went Wrong and How We Can Reboot

by Rob Reich, Mehran Sahami and Jeremy M. Weinstein · 6 Sep 2021

Power and Progress: Our Thousand-Year Struggle Over Technology and Prosperity

by Daron Acemoglu and Simon Johnson · 15 May 2023 · 619pp · 177,548 words

How to Spend a Trillion Dollars

by Rowan Hooper · 15 Jan 2020 · 285pp · 86,858 words