Data Mining: Concepts, Models, Methods, and Algorithms

by Mehmed Kantardzić · 2 Jan 2003 · 721pp · 197,134 words

mining. There have been many techniques used to model global or local temporal events. We will introduce only some of the most popular modeling techniques. Finite State Machine (FSM) has a set of states and a set of transitions. A state may have transitions to other states that are caused by fulfilling

…

well when the transitions are not precise and does not scale well when the set of symbols for sequence representation is large. Figure 12.20. Finite-state machine. (a) State-transition table; (b) state-transition diagram. Markov Model (MM) extends the basic idea behind FSM. Both FSM and MM are directed graphs

…

traditional algorithms for frequent itemsets detection. Counting serial itemsets, on the other hand, requires more computational resources. For example, unlike for parallel itemsets, we need finite-state automata to recognize serial episodes. More specifically, an appropriate l-state automaton can be used to recognize occurrences of an l-node serial sequence. For

…

B A B C B A B A B B C B A C C}: (a) Find the longest subsequence with frequency ≥ 3. (b) Construct finite-state automaton (FSA) for the subsequence found in (a). 7. Find normalized contiguity matrix for the table of U.S. cities: Minneapolis Chicago New York Nashville

Artificial Intelligence: A Modern Approach

by Stuart Russell and Peter Norvig · 14 Jul 2019 · 2,466pp · 668,761 words

when written in a factored-representation language such as propositional logic and around 10^38 pages when written in an atomic language such as that of finite-state automata. On the other hand, reasoning and learning become more complex as the expressive power of the representation increases. To gain the benefits of expressive

…

anywhere in the state space; therefore a complete algorithm must be capable of systematically exploring every state that is reachable from the initial state. In finite state spaces that is straightforward to achieve: as long as we keep track of paths and cut off ones that are cycles (e.g. Arad to

…

are lavender, and potential future nodes have faint dashed lines. Expanded nodes with no descendants in the frontier (very faint lines) can be discarded. For finite state spaces that are trees it is efficient and complete; for acyclic state spaces it may end up expanding the same state many times via different

…

-first and breadth-first search. Like depth-first search, its memory requirements are modest: O(bd) when there is a solution, or O(bm) on finite state spaces with no solution. Like breadth-first search, iterative deepening is optimal for problems where all actions have the same cost, and is complete on

…

finite acyclic state spaces, or on any finite state space when we check nodes for cycles all the way up the path. Figure 3.12 Iterative deepening and depth-limited tree-like search. Iterative

…

versions which don’t check for repeated states. For graph searches which do check, the main differences are that depth-first search is complete for finite state spaces, and the space and time complexities are bounded by the size of the state space (the number of vertices and edges, |V| + |E|). Figure

…

search for Bucharest with the straight-line distance heuristic hSLD. Nodes are labeled with their h-values. Greedy best-first graph search is complete in finite state spaces, but not in infinite ones. The worst-case time and space complexity is O(|V|). With a good heuristic function, however, the complexity can

…

the earlier incarnation of the current state, so the new incarnation can be discarded. With this check, we ensure that the algorithm terminates in every finite state space, because every path must reach a goal, a dead end, or a repeated state. Notice that the algorithm does not check whether the current

…

by the work of John Koza (1992, 1994), but it goes back at least to early experiments with machine code by Friedberg (1958) and with finite-state automata by Fogel et al. (1966). As with genetic algorithms, there is debate about the effectiveness of the technique. Koza et al. (1999) describe experiments

…

that “infinite horizon” does not necessarily mean that all state sequences are infinite; it just means that there is no fixed deadline. There can be finite state sequences in an infinite-horizon MDP that contains a terminal state. The next question we must decide is how to calculate the utility of state

…

, so it is a solution to the Bellman equations, and πi must be an optimal policy. Because there are only finitely many policies for a finite state space, and each iteration can be shown to yield a better policy, policy iteration must terminate. The algorithm is shown in Figure 16.9. As

…

) state space. This means we will have to redesign the dynamic programming algorithms from Sections 16.2.1 and 16.2.2, which assumed a finite state space and a finite number of actions. Here we describe a value iteration algorithm designed specifically for POMDPs, followed by an online decision-making algorithm

…

of POMDP value iteration. Other algorithms soon followed, including an approach due to Hansen (1998) that constructs a policy incrementally in the form of a finite-state automaton whose states define the possible belief states of the agent. More recent work in AI has focused on point-based value iteration methods that

…

for games that will be played an infinite number of rounds. For this reason, it is standard to represent strategies for infinitely repeated games as finite state machines (FSMs) with output. Figure 17.3 illustrates a number of FSM strategies for the iterated prisoner’s dilemma. Consider the Tit-for-Tat strategy

…

1. Row 3: Column 1, 0.8516. Column 2, 0.9078. Column 3, 0.9578. Column 4, positive 1. Figure 17.3 Some common, colorfully named finite-state machine strategies for the infinitely repeated prisoner’s dilemma. The HAWK and DOVE strategies are simpler: HAWK simply plays testify on every round, while DOVE

…

to compute the average over the finite repeating sequence. In what follows, we will assume that players in an infinitely repeated game simply choose a finite state machine to play the game on their behalf. We don’t impose any constraints on these machines: they can be as big and elaborate as

…

players want. When all players have chosen a finite state machine to play on their behalf, then we can compute the payoffs for each player using the limit of means approach as described above. In

…

might have adopted it. We can also get different solutions by changing the agents, rather than changing the rules of engagement. Suppose the agents are finite state machines with n states and they are playing a game with m > n total steps. The agents are thus incapable of representing the number of

…

extensively by Axelrod (1985) and Poundstone (1993). Repeated games were introduced by Luce and Raiffa (1957), and Abreu and Rubinstein (1988) discuss the use of finite state machines for repeated games—technically, Moore machines. The text by Mailath and Samuelson (2006) concentrates on repeated games. Games of partial information in extensive form

…

particular properties.” Norvig (2009) gives some examples of tasks that can be accomplished with n-gram models. Chomsky (1956, 1957) pointed out the limitations of finite-state models compared with context-free models, concluding, “Probabilistic models give no particular insight into some of the basic problems of syntactic structure.” This is true

…

gone too far (Church and Hestness, 2019). Early linguists concentrated on actual language usage data, including frequency counts. Noam Chomsky (1956) demonstrated the limitations of finite-state models, leading to an emphasis on theoretical studies of syntax, disregarding actual language performance. This approach dominated for twenty years, until empiricism made a comeback

…

render the resulting path planning problem computationally difficult. Figure 26.32 (a) Genghis, a hexapod robot. (Image courtesy of Rodney A. Brooks.) (b) An augmented finite state machine (AFSM) that controls one leg. The AFSM reacts to sensor feedback: if a leg is stuck during the forward swinging phase, it will be

…

motion is blocked, simply retract it, lift it higher, and try again. The resulting controller is shown in Figure 26.32(b) as a simple finite state machine; it constitutes a reflex agent with state, where the internal state is represented by the index of the current machine state (s1 through s4

…

). 26.9.2 Subsumption architectures The subsumption architecture (Brooks, 1986) is a framework for assembling reactive controllers out of finite state machines. Nodes in these machines may contain tests for certain sensor variables, in which case the execution trace of a

…

finite state machine is conditioned on the outcome of such a test. Arcs can be tagged with messages that will be generated when traversing them, and that

…

are sent to the robot’s motors or to other finite state machines. Additionally, finite state machines possess internal timers (clocks) that control the time it takes to traverse an arc. The resulting machines are called augmented

…

finite state machines (AFSMs), where the augmentation refers to the use of clocks. An example of a simple AFSM is the four-state machine we just talked

…

). A Bayesian approach to relevance in game playing. AIJ, 97, 195–242. Baum, L. E. and Petrie, T. (1966). Statistical inference for probabilistic functions of finite state Markov chains. Annals of Mathematical Statistics, 41, 1554–1563. Baxter, J. and Bartlett, P. (2000). Reinforcement learning in POMDPs via direct gradient ascent. In ICML

…

-linear models. In ACL-04. Clarke, A. C. (1968). 2001: A Space Odyssey. Signet. Clarke, E. and Grumberg, O. (1987). Research on automatic verification of finite-state concurrent systems. Annual Review of Computer Science, 2, 269–290. Clearwater, S. H. (Ed.). (1996). Market-Based Control. World Scientific. Clocksin, W. F. and Mellish

…

, 23–41. Robinson, S. (2002). Computer scientists find unexpected depths in airfare search problem. SIAM News, 35(6). Roche, E. and Schabes, Y. (Eds.). (1997). Finite-State Language Processing. Bradford Books. Rock, I. (1984). Perception. W. H. Freeman. Rokicki, T., Kociemba, H., Davidson, M., and Dethridge, J. (2014). The diameter of the

…

, 1100 ADP (adaptive dynamic programming), 844, 869 adversarial example, 821, 838 adversarial search, 192 adversarial training, 975 adversary argument, 154 Advice Taker, 37 AFSM (augmented finite state machine), 976 Agarwal, P. 974, 986, 1041, 1105, 1112 agent, 21, 54, 78 active learning, 848 architecture of, 65, 1069 autonomous, 228 benevolent, 589 components

…

second-price, 626 truth-revealing, 625 Vickrey, 626 Audi, R., 1058, 1086 Auer, P., 587, 1086 Auer, S., 334, 357, 1088, 1103 augmentation, 902 augmented finite state machine (AFSM), 976 augmented grammar, 892 Aumann, R., 637, 1086 AURA (theorem prover), 327, 331 Auray, J. R, 549, 1087 Austerweil, J. L., 872, 1099

…

.E., 79, 162, 296, 398, 400, 401, 983, 1095 filtering, 150, 353, 484–485, 514, 578, 795, 938 assumed-density, 517 Fine, S., 516, 1095 finite state machine, 604 Fink, D., 137, 161, 1106 Finkelstein, L., 191, 1089 Finn, C., 737, 841, 986, 1095, 1103 Finney, D. I., 473, 1095 Firat, O

Erlang Programming

by Francesco Cesarini · 496pp · 70,263 words

5. Process Design Patterns: Client/Server Models; A Client/Server Example; A Process Pattern Example; Finite State Machines; An FSM Example; A Mutex Semaphore; Event Managers and Handlers; A Generic Event Manager Example; Event Handlers; Exercises

…

will be informed, and then can either handle the crash or choose to crash itself. • OTP provides a number of generic behaviors, such as servers, finite state machines, and event handlers. These worker processes have built-in robustness, since they handle all the general (and therefore difficult) concurrent parts of these patterns

…

availability. Requests to this server will allow clients (usually implemented as Erlang processes) to access these resources and services. Another very common pattern deals with finite state machines, also referred to as FSMs. Imagine a process handling events in an instant messaging (IM) session. This process, or

…

finite state machine as we should call it, will be in one of three states. It could be in an offline state, where the session with the

…

three categories. In this chapter, we will look at examples of process design patterns, explaining how they can be used to program client/servers, finite state machines, and event handlers. An experienced Erlang programmer will recognize these patterns in the design phase of the project and use libraries and templates that

…

window and all the widgets associated with it. The loop function is not called, allowing the process to terminate normally. Finite State Machines Erlang processes can be used to implement finite state machines. A finite state machine, or FSM for short, is a model that consists of a finite number of states and events. You can

…

state of the FSM, a set of actions and a transition to a new state will occur (see Figure 5-5). Figure 5-5. A finite state machine In Erlang, each state is represented as a tail-recursive function, and each event is represented as an incoming message. When a message is

…

achieved by calling the function corresponding to the new state. An FSM Example As an example, think of modeling a fixed-line phone as a finite state machine (see Figure 5-6). The phone can be in the idle state when it is plugged in and waiting either for an incoming phone

…

Erlang handles them graciously is not a surprise. Figure 5-6. Fixed-line phone finite state machine. † Or any other relative of your choice who tends to call you very early on a Saturday morning. When prototyping with the early versions of Erlang between 1987 and 1991, it was the

…

Plain Old Telephony System (POTS) finite state machines described in this section that the development team used to test their ideas of what Erlang should look like. With a tail-recursive function

…

() -> ... We leave the coding of the functions for the other states as an exercise. A Mutex Semaphore Let’s look at another example of a finite state machine, this time implementing a mutex semaphore. A semaphore is a process that serializes access to a particular resource, guaranteeing mutual exclusion. Mutex semaphores might

…

to denote events. And before reading on, try to figure out what the terminate function should do to clean up when the mutex is terminated. Figure 5-8. The mutex message sequence diagram. -module(mutex). -export([start/0, stop/0]). -export([wait/0, signal/0]). -export([init/0

…

, or terminating, making its supervisor resolve the problem. Supervisors should behave in a similar manner, irrespective of what the system does. Together with clients/servers, finite state machines, and event handlers, they are considered a process design pattern: • The generic part of the supervisor starts the children, monitors them, and restarts them

…

to the other process, causing it to terminate as well. Exercise 6-2: A Reliable Mutex Semaphore Suppose that the mutex semaphore from the section “Finite State Machines” on page 126 in Chapter 5 is unreliable. What happens if a process that currently holds the semaphore terminates prior to releasing it? Or

…

processing, and supervisors, whose task is to monitor workers and other supervisors. Worker behaviors, often denoted in diagrams as circles, include servers, event handlers, and finite state machines. Supervisors, denoted in illustrations as squares, monitor their children, both workers and other supervisors, creating what is called a supervision tree (see Figure 12

…

. The children that make up the supervision tree include both supervisors and worker processes. Worker processes are OTP behaviors including gen_server, gen_fsm (supporting finite state machine behavior), and gen_event (which provides event-handling functionality). Worker processes have to link themselves to the supervisor behavior and handle specific system messages

…

in more detail when working with generic servers, supervisors, and applications. Behaviors we have not covered but which we briefly introduced in this chapter include finite state machines, event handlers, and special processes. All of these behavior library modules have manual pages that you can reference. In addition, the Erlang documentation has

…

a section on OTP design principles that provides more details and examples of these behaviors. Finite state machines are a crucial component of telecom systems. In Chapter 5, we introduced the idea of modeling a phone as a

…

finite state machine. If the phone is not being used, it is in state idle. If an incoming call arrives, it goes to state ringing. This does

…

; it could instead be an ATM cross-connect or the handling of data in a protocol stack. The gen_fsm module provides you with a finite state machine behavior that you can use to solve these problems. States are defined as callback functions that return a tuple containing the next State and

…

the updated loop data. You can send events to these states synchronously and asynchronously. The finite state machine callback module should also export the standard callback functions such as init, terminate, and handle_info. As gen_fsm is a standard OTP

…

have well-defined behaviors and roles in the system. You should seriously consider using the OTP behaviors described in Chapter 12, including servers, event handlers, finite state machines, supervisors, and applications. When working with message passing, all messages should be tagged. It makes the order of clauses in the receive statement unimportant

…

properties of the system and then tests the properties for randomly generated input values. QuickCheck is also able to test properties of concurrent systems using finite state machines to exercise their behavior, and to shrink any data that fails to satisfy the property to minimal counterexamples. QuickCheck is a product of Quviq

…

, 168, 179 file module, 79 file2tab function, 226 filename module, 79 files/1 function, 405 fill/0 function, 375, 376 filter function, 191, 192, 196 finite state machines (see FSMs) firewalls, 261 first/1 function, 221 float/1 function, 54 floating-point division operator, 17 floats defined, 17 Erlang type notation, 397

…

, 369 format/2 function, 57, 101, 356 frequency module allocate function, 119, 123 deallocate function, 120, 124 init function, 121 Fritchie, Scott Lystig, 215 FSMs (finite state machines) busy state, 117 chapter exercises, 138 offline state, 117 online state, 117 process design patterns, 117, 126–131, 290 fun2ms/1 function

Optimization Methods in Finance

by Gerard Cornuejols and Reha Tutuncu · 2 Jan 2006 · 130pp · 11,880 words

the proof of Theorem 3.2 can naturally be used for detection of arbitrage opportunities. However, as we discussed above, this argument works only for finite state spaces. In this section, we discuss how LP formulations can be used to detect arbitrage opportunities without limiting consideration to

…

finite state spaces. The price we pay for this flexibility is the restriction on the selection of the securities: we only consider the prices of a set

Turing's Cathedral

by George Dyson · 6 Mar 2012

storage, putting the cost of a cerebral cortex at £300 per annum—his King’s College fellowship for the year. Viewed as part of a finite-state Turing machine, the delay line represented a continuous loop of tape, 1,000 squares in length and making 1,000 complete passes per second under

…

microseconds count, they are closer, from the bottom up, in time. Meaning just seems to “come to mind” first. An Internet search engine is a finite-state, deterministic machine, except at those junctures where people, individually and collectively, make a nondeterministic choice as to which results are selected as meaningful and given

Monte Carlo Simulation and Finance

by Don L. McLeish · 1 Apr 2005

R, rbinom(m,n,p) will generate a vector of length m of Binomial(n, p) variates. Random Samples Associated with Markov Chains Consider a finite state Markov Chain, a sequence of (discrete) random variables X1, X2, ... each of which takes integer values 1, 2, ..., N (called states). The number of states

…

states of a Markov chain, but we will give some examples of this later. For the present we restrict attention to the case of a finite state space. The transition probability matrix is a matrix P describing the conditional probability of moving between possible states of the chain, so that P [Xn

…

run the chain from an arbitrary starting value and then delete the initial transient. An alternative elegant method that is feasible at least for some finite state Markov chains is the method of “coupling from the past” due to Propp and Wilson (1996). We assume that we are able to generate transitions

Darwin Among the Machines

by George Dyson · 28 Mar 2012 · 463pp · 118,936 words

be stored in the millisecond it took a train of pulses to travel the length of a five-foot “tank.” Viewed as part of a finite-state Turing machine, the delay line represented a continuous loop of tape, a thousand squares in length and making a thousand complete passes per second under

…

of life, 29–30, 112, 177 incompleteness (mathematical), 49–50, 53–54, 70, 72, 78, 120, 167, 228 Industrial Revolution, 21–22, 134 infinity, and finite-state machines, 10, 35, 43, 56, 130, 190 information. see also bandwidth; bits; communication; cybernetics; telecommunication and cybernetics, 6, 98, 101 defined, by Bateson, 167 flow

Syntactic Structures

by Noam Chomsky · 17 Oct 2008

primitives were independently defined, not a product of more basic semantic, functional or notional concepts (chapter 2), that they could not be formulated through finite-state Markov processes (chapter 3), and that restricting rule schemas to those of phrase structure grammars yielded clumsiness and missed insights and elegance which would be

…

produced in this way. Any language that can be produced by a machine of this sort we call a finite state language; and we can call the machine itself a finite state grammar. A finite state grammar can be represented graphically in the form of a "state diagram".1 For example, the grammar

…

, and we can have any number of closed loops of any length. The machines that produce languages in this manner are known mathematically as "finite state Markov processes." To complete this elementary communication theoretic model for language, we assign a probability to each transition from state to state. We can then

…

this point of view in the syntactic study of some language such as English or a formalized system of mathematics. Any attempt to construct a finite state grammar for English runs into serious difficulties and complications at the very outset, as the reader can easily convince himself. However, it is

…

, 1955), 02. 21 AN ELEMENTARY LINGUISTIC THEORY example, in view of the following more general remark about English: (9) English is not a finite state language. That is, it is impossible, not just difficult, to construct a device of the type described above (a diagram such as (7) or (8

…

followed by the identical string X, and only these. . . . We can easily show that each of these three languages is not a finite state language. Similarly, languages such as (10) where the a's and b's in question are not consecutive, but are embedded in

…

exhausting these possibilities) will have all of the mirror image properties of (10ii) which exclude (10ii) from the set of finite state languages. Thus we can find various kinds of non- 3 See my "Three models for the description of language," I.R.E. Transactions on Information

…

of (9). Notice in particular that the set of well-formed formulas of any formalized system of mathematics or logic will fail to constitute a finite state language, because of paired parentheses or equivalent restrictions. finite state models within English. This is a rough indication of

…

than a million words. Such arbitrary limitations serve no useful purpose, however. The point is that there are processes of sentence formation that finite state grammars are intrinsically not equipped to handle. If these processes have no finite limit, we can prove the literal inapplicability

…

of this elementary theory. If the processes have a limit, then the construction of a finite state grammar will not be literally out of the question, since it will be possible to list the sentences, and a list is essentially

…

a trivial finite state grammar. But this grammar will be so complex that it will be of little use or interest. In general, the assumption that languages are

…

to simplify the description of these languages. If a grammar does not have recursive devices (closed loops, as in (8), in the finite state grammar) it will be prohibitively complex. If it does have recursive devices of some sort, it will produce infinitely many sentences. In short, the approach

…

to the analysis of grammaticalness suggested here in terms of a finite state Markov process that produces sentences from left to right, appears to lead to a dead end just as surely as the proposals rejected in

…

simple linear method of representation, but to generate at least one such level from left to right by a device with more capacity than a finite state Markov process. There are so many difficulties with the notion of linguistic level based on left to right generation, both in terms of complexity of

…

manner in terms of a single level (i.e., if it is a finite state language) then this description may indeed be simplified by construction of such higher levels; but to generate non-finite state languages such as English we need fundamentally different methods, and a more general concept of "linguistic

…

this approach any further. The grammars that we discuss below that do not generate from left to right also correspond to processes less elementary than finite state Markov processes. But they are perhaps less powerful than the kind of device that would be required for direct left-to-right generation of English

…

formal way in terms of the associated diagrams. 4.2 In § 3 we considered languages, called "finite state languages", which were generated by finite state Markov processes. Now we are considering terminal languages that are generated by systems of the form [Σ, F]. These two types of languages are

…

related in the following way Theorem: Every finite state language is a terminal language, but there are terminal languages which are not finite state languages.4 The import of this theorem is that description in terms of phrase structure is essentially more

…

powerful than description in terms of the elementary theory presented above in § 3. As examples of terminal languages that are not finite state languages we have the languages (10i), (10ii) discussed in § 3. Thus the language (10i), consisting of all and only the

…

In § 3 we pointed out that the languages (10i) and (10ii) correspond to subparts of English, and that therefore the finite state Markov process model is not adequate for English. We now see that the phrase structure model does not fail in such cases. We

…

more powerful than description in terms of phrase structure, just as the latter is essentially more powerful than description in terms of finite state Markov processes that generate sentences from left to right. In particular, such languages as (10iii) which lie beyond the bounds of phrase structure

…

the notion of statistical order of approximation). In carrying out this independent and formal study, we find that a simple model of language as a finite state Markov process that produces sentences from left to right is not acceptable, and that such fairly abstract linguistic levels as phrase structure and

Masterminds of Programming: Conversations With the Creators of Major Programming Languages

by Federico Biancuzzi and Shane Warden · 21 Mar 2009 · 496pp · 174,084 words

domain. How do you make the idea of syntax-driven transformations accessible to users who might not know very much or anything at all about finite-state machines and push-down automata? Al: Certainly as a user of AWK, you don’t need to know about these concepts. On the other hand

…

, if you’re into language design and implementation, knowledge of finite-state machines and context-free grammars is essential. Should a user of lex or yacc understand the context-free grammar even if the programs they produce

…

don’t require their users to understand them? Al: Most users of lex can use lex without understanding what a finite-state machine is. A user of yacc is really writing a context-free grammar, so from that perspective, the user of yacc certainly gets to appreciate

…

, particularly regular expressions and context-free grammars, for describing the important syntactic features of programming languages. The automata that recognize these formal languages, such as finite-state machines and push-down automata, can serve as models for the algorithms used by compilers to scan and parse programs. Perhaps the greatest benefit of

…

is currently an active research area. Many researchers are exploring parallel hardware and software implementations of pattern-matching algorithms like the Aho-Corasick algorithm or finite-state algorithms. Some of the strong motivators are genomic analyses and intrusion detection systems. What motivated you and Corasick to develop the Aho-Corasick algorithm? Al

Possible Minds: Twenty-Five Ways of Looking at AI

by John Brockman · 19 Feb 2019 · 339pp · 94,769 words

analog components like vacuum tubes were repurposed to build digital computers in the aftermath of World War II. Individually deterministic finite-state processors, running finite codes, are forming large-scale, nondeterministic, non-finite-state metazoan organisms running wild in the real world. The resulting hybrid analog/digital systems treat streams of bits collectively, the

Applied Cryptography: Protocols, Algorithms, and Source Code in C

by Bruce Schneier · 10 Nov 1993

Types and Programming Languages

by Benjamin C. Pierce · 4 Jan 2002 · 647pp · 43,757 words

A Discipline of Programming

by E. Dijkstra · 15 Feb 1976 · 232pp

On Language: Chomsky's Classic Works Language and Responsibility and Reflections on Language in One Volume

by Noam Chomsky and Mitsou Ronat · 26 Jul 2011

Language and Mind

by Noam Chomsky · 1 Jan 1968

The Logician and the Engineer: How George Boole and Claude Shannon Created the Information Age

by Paul J. Nahin · 27 Oct 2012 · 229pp · 67,599 words

The Language Instinct: How the Mind Creates Language

by Steven Pinker · 1 Jan 1994 · 661pp · 187,613 words

Monadic Design Patterns for the Web

by L.G. Meredith · 214pp · 14,382 words

The Architecture of Open Source Applications

by Amy Brown and Greg Wilson · 24 May 2011 · 834pp · 180,700 words

ZeroMQ

by Pieter Hintjens · 12 Mar 2013 · 1,025pp · 150,187 words

Darwin's Dangerous Idea: Evolution and the Meanings of Life

by Daniel C. Dennett · 15 Jan 1995 · 846pp · 232,630 words

Principles of Protocol Design

by Robin Sharp · 13 Feb 2008

The Science of Language

by Noam Chomsky · 24 Feb 2012

Reactive Messaging Patterns With the Actor Model: Applications and Integration in Scala and Akka

by Vaughn Vernon · 16 Aug 2015

Data Mining: Concepts and Techniques

by Jiawei Han, Micheline Kamber and Jian Pei · 21 Jun 2011

The Art of Scalability: Scalable Web Architecture, Processes, and Organizations for the Modern Enterprise

by Martin L. Abbott and Michael T. Fisher · 1 Dec 2009

The Demon in the Machine: How Hidden Webs of Information Are Finally Solving the Mystery of Life

by Paul Davies · 31 Jan 2019 · 253pp · 83,473 words

Natural language processing with Python

by Steven Bird, Ewan Klein and Edward Loper · 15 Dec 2009 · 504pp · 89,238 words

Designing Data-Intensive Applications: The Big Ideas Behind Reliable, Scalable, and Maintainable Systems

by Martin Kleppmann · 17 Apr 2017

When Computers Can Think: The Artificial Intelligence Singularity

by Anthony Berglas, William Black, Samantha Thalind, Max Scratchmann and Michelle Estes · 28 Feb 2015

Doing Data Science: Straight Talk From the Frontline

by Cathy O'Neil and Rachel Schutt · 8 Oct 2013 · 523pp · 112,185 words

The Joy of Clojure

by Michael Fogus and Chris Houser · 28 Nov 2010 · 706pp · 120,784 words

Designing Data-Intensive Applications: The Big Ideas Behind Reliable, Scalable, and Maintainable Systems

by Martin Kleppmann · 16 Mar 2017 · 1,237pp · 227,370 words

Being Geek: The Software Developer's Career Handbook

by Michael Lopp · 20 Jul 2010 · 336pp · 88,320 words

Clojure Programming

by Chas Emerick, Brian Carper and Christophe Grand · 15 Aug 2011 · 999pp · 194,942 words

The Friendly Orange Glow: The Untold Story of the PLATO System and the Dawn of Cyberculture

by Brian Dear · 14 Jun 2017 · 708pp · 223,211 words

The Mythical Man-Month

by Frederick P. Brooks, Jr. · 1 Jan 1975 · 259pp · 67,456 words

Relevant Search: With Examples Using Elasticsearch and Solr

by Doug Turnbull and John Berryman · 30 Apr 2016 · 593pp · 118,995 words

JPod

by Douglas Coupland · 30 Apr 2007 · 487pp · 95,085 words

Mastering ElasticSearch

by Rafal Kuc and Marek Rogozinski · 14 Aug 2013 · 480pp · 99,288 words

High-Frequency Trading

by David Easley, Marcos López de Prado and Maureen O'Hara · 28 Sep 2013

Accelerando

by Charles Stross · 22 Jan 2005 · 489pp · 148,885 words

Europe: A History

by Norman Davies · 1 Jan 1996

The Practice of Cloud System Administration: DevOps and SRE Practices for Web Services, Volume 2

by Thomas A. Limoncelli, Strata R. Chalup and Christina J. Hogan · 27 Aug 2014 · 757pp · 193,541 words

Writing Effective Use Cases

by Alistair Cockburn · 30 Sep 2000

Speaking Code: Coding as Aesthetic and Political Expression

by Geoff Cox and Alex McLean · 9 Nov 2012

Geek Sublime: The Beauty of Code, the Code of Beauty

by Vikram Chandra · 7 Nov 2013 · 239pp · 64,812 words

C++ Concurrency in Action: Practical Multithreading

by Anthony Williams · 1 Jan 2009 · 818pp · 153,952 words

The Cultural Logic of Computation

by David Golumbia · 31 Mar 2009 · 268pp · 109,447 words

Natural Language Annotation for Machine Learning

by James Pustejovsky and Amber Stubbs · 14 Oct 2012 · 502pp · 107,510 words

Design Patterns: Elements of Reusable Object-Oriented Software

by Erich Gamma, Richard Helm, Ralph Johnson and John Vlissides · 18 Jul 1995

New Laws of Robotics: Defending Human Expertise in the Age of AI

by Frank Pasquale · 14 May 2020 · 1,172pp · 114,305 words

Clean Code: A Handbook of Agile Software Craftsmanship

by Robert C. Martin · 1 Jan 2007 · 462pp · 172,671 words

Practical Vim: Edit Text at the Speed of Thought

by Drew Neil · 6 Oct 2012 · 722pp · 90,903 words

Practical Vim, Second Edition

by Drew Neil

Practical Vim

by Drew Neil

Learn Python the Hard Way

by Zed Shaw · 1 Jan 2010 · 249pp · 45,639 words

Wireless

by Charles Stross · 7 Jul 2009

Fuller Memorandum

by Charles Stross · 14 Jan 2010 · 366pp · 107,145 words

The Tangled Web: A Guide to Securing Modern Web Applications

by Michal Zalewski · 26 Nov 2011 · 570pp · 115,722 words

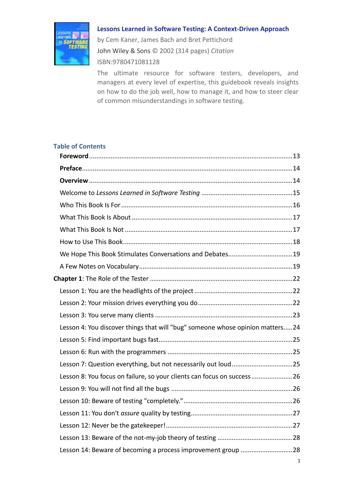

Lessons Learned in Software Testing: A Context-Driven Approach

by Anson-QA

Our Final Invention: Artificial Intelligence and the End of the Human Era

by James Barrat · 30 Sep 2013 · 294pp · 81,292 words

Pragmatic.Programming.Erlang.Jul.2007

by Unknown

The Art of UNIX Programming

by Eric S. Raymond · 22 Sep 2003 · 612pp · 187,431 words

Introducing Elixir

by Simon St.Laurent and J. David Eisenberg · 20 Dec 2016

Solr in Action

by Trey Grainger and Timothy Potter · 14 Sep 2014 · 1,085pp · 219,144 words

Elixir in Action

by Saša Jurić · 30 Jan 2019