Human + Machine: Reimagining Work in the Age of AI

by Paul R. Daugherty and H. James Wilson · 15 Jan 2018 · 523pp · 61,179 words

is equipped with vision sensors and laser scanners. In aggregate, they join forces to manufacture on the go. At Inertia Switch, robotic intelligence and sensor fusion enable robot-human collaboration. The manufacturing firm uses Universal Robotics’ robots, which can learn tasks on the go and can flexibly move between tasks, making

…

. (For another example of experimentation in a retail setting, see the sidebar “Controlled Chaos.”) Build-Measure-Learn The technologies that power Amazon Go—computer vision, sensor fusion, and deep learning—are systems very much under development. Limitations include cameras that have a hard time tracking loose fruits and vegetables in a customer

Autonomous Driving: How the Driverless Revolution Will Change the World

by Andreas Herrmann, Walter Brenner and Rupert Stadler · 25 Mar 2018

Early in 2016, Nvidia surprisingly announced its own computing platform for controlling autonomous vehicles. This platform has sufficient processing power to support deep learning, sensor fusion and surround vision, all of which are key elements for a self-driving car. It also announced that its PX2 would be used as a

The History of the Future: Oculus, Facebook, and the Revolution That Swept Virtual Reality

by Blake J. Harris · 19 Feb 2019 · 561pp · 163,916 words

orientation tracking, it is all very new to me. But I am beginning to better understand what needs to be done; I started reading about sensor fusion.” Sensor fusion is, literally, the process of fusing together data from multiple sensors. Specifically, Antonov was referring to the need for a VR headset to track head

…

that we’ve developed our own motion tracker sensor chips . . . [which] gives us better data, more samples to work with when we’re doing our sensor fusion, so we can get better tracking overall; and most importantly, because it’s running at 1000 Hz (instead of 250 Hz) and it’s four

Life as a Passenger: How Driverless Cars Will Change the World

by David Kerrigan · 18 Jun 2017 · 472pp · 80,835 words

recognition and Artificial Intelligence. These need to be combined to achieve a solution that can begin to be credible as a substitute for human control. Sensor Fusion As human drivers, we rely primarily on our eyes for inputs, while our brains analyse the situation and direct the muscles in our legs and

…

eye, so most driverless cars rely on an approach of adding multiple types of sensor to vehicles and combining their inputs using a technique called Sensor Fusion. The following illustration shows a prototype driverless car, and the position of the various sensors that contribute to the overall ability of the car to

…

its customer experience because it builds both the software and the hardware for its product suite, Waymo can claim tighter integration between its sensor hardware, sensor fusion software, image recognition and other aspects of its self-driving system. Waymo also claims individual performance benefits in each of its new sensors, including vision

Arriving Today: From Factory to Front Door -- Why Everything Has Changed About How and What We Buy

by Christopher Mims · 13 Sep 2021 · 385pp · 112,842 words

phone’s camera and screen—that’s accomplished largely through SLAM. TuSimple’s system relies on “sensor fusion,” as the last step of localizing the truck in space. In the continuum from data to knowledge, sensor fusion is the part of perception in which information from all of a machine’s—or our own

…

—senses is collected and synchronized. It’s essentially the creation of an internal consensus reality. For the purposes of a self-driving truck, sensor fusion is the process by which data from all those different sensors—the IMUs, GPS, cameras, lidar, and radar—are brought together in a single virtual

A Thousand Brains: A New Theory of Intelligence

by Jeff Hawkins · 15 Nov 2021 · 253pp · 84,238 words

world is uniform and complete. The hierarchy of features theory can’t explain how this happens. This problem is called the binding problem or the sensor-fusion problem. More generally, the binding problem asks how inputs from different senses, which are scattered all over the neocortex with all sorts of distortions, are

Age of Context: Mobile, Sensors, Data and the Future of Privacy

by Robert Scoble and Shel Israel · 4 Sep 2013 · 202pp · 59,883 words

, or even to check if your spouse left the toilet seat up. Melanie Martella, Executive Editor of Sensors magazine, introduced us to the concept of sensor fusion, a fast-emerging technology that takes data from disparate sources to come up with more accurate, complete and dependable data

…

. Sensor fusion enables the same sense of depth that is available in 3D modeling, which is used for all modern design and construction, as well as the

Amazon: How the World’s Most Relentless Retailer Will Continue to Revolutionize Commerce

by Natalie Berg and Miya Knights · 28 Jan 2019 · 404pp · 95,163 words

Amazon Go Retail First checkout-free store. Shoppers scan their Amazon app to enter. The high-tech convenience store uses a combination of computer vision, sensor fusion and deep learning to create a frictionless customer experience. No 2019 and beyond Fashion or furniture stores would be a logical next step NOTE Amazon

…

most friction-filled process of any store-based shopping journey, ie checkout, affords the customer unprecedented speed and simplicity. Autonomous computing: AI-based computer vision, sensor fusion and deep learning technologies power Amazon Go’s Just Walk Out technology. Just Walk Out technology operates without manual intervention, eliminating the need for checkout

Army of None: Autonomous Weapons and the Future of War

by Paul Scharre · 23 Apr 2018 · 590pp · 152,595 words

into avoiding enemy targets and attacking false ones. In the near term, the best chances for high-reliability target recognition lie with the kind of sensor fusion that DARPA’s CODE project envisions. By fusing together data from multiple angles and multiple types of sensors, computers could possibly distinguish between military targets

Robot, Take the Wheel: The Road to Autonomous Cars and the Lost Art of Driving

by Jason Torchinsky · 6 May 2019 · 175pp · 54,755 words

of these different forms of world sensing are combined to create an overall, composite image of the surrounding reality for the car. Some call this “sensor fusion,”³³ and it’s especially important because each method, individually, has some pretty significant flaws and limitations that could cause real problems in practice. Supplementing the

Architects of Intelligence

by Martin Ford · 16 Nov 2018 · 586pp · 186,548 words

Data Mining in Time Series Databases

by Mark Last, Abraham Kandel and Horst Bunke · 24 Jun 2004 · 205pp · 20,452 words

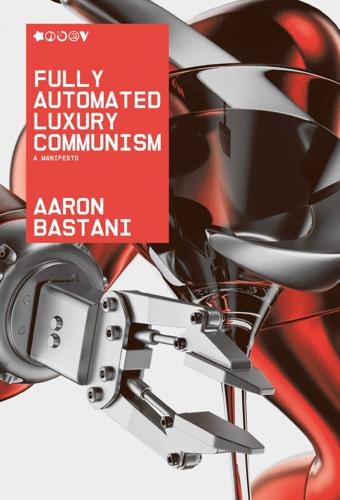

Fully Automated Luxury Communism

by Aaron Bastani · 10 Jun 2019 · 280pp · 74,559 words